There’s never a dull moment at Red Canary, especially while working on the Incident Handling team. We help customers understand the threats we detect in their environments, assist with remediating those threats, and help them understand the broader security landscape. We also commonly work with customers who have undergone red team engagements and penetration tests, helping them interpret the results and decide how to act on them. This often leads to confusion and questions such as “Why didn’t Red Canary detect this?” That line of questioning rests on false (but reasonable) assumptions that many customers make about their endpoint detection and response or managed detection and response (EDR/MDR) solutions. A better way to frame it is: could this behavior have been prevented? If not, can it be detected in the future?

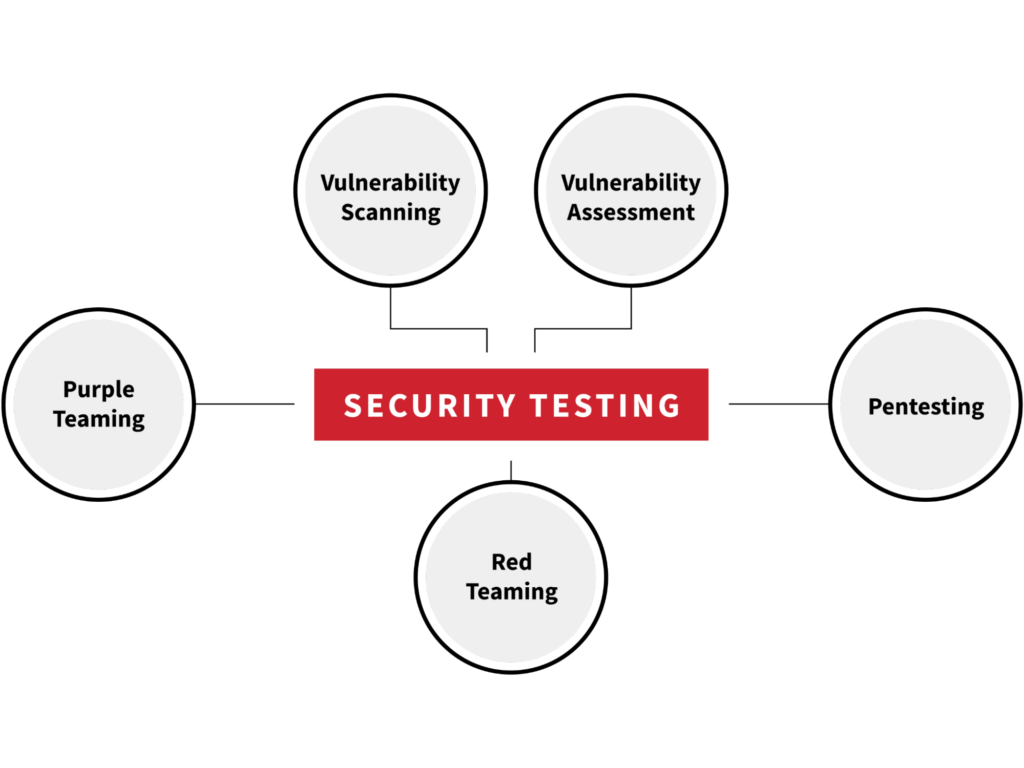

Security testing has come a long way since its inception. The testing methods outlined below won’t necessarily be performed in a linear fashion. Instead, the maturity of an organization’s security program dictates the order in which they’re executed.

Vulnerability scanning/assessment (or, the rise of SATAN)

To address this issue, we have to look at security testing from the beginning. That takes us back to the mid-1990s and the release of the Security Administrator Tool for Analyzing Networks (SATAN). SATAN was one of the first vulnerability scanners built to find network vulnerabilities at a time when more and more computers were becoming reliant on the internet. Early vulnerability scanners relied on signatures that looked for common network-related problems. A few years later, The MITRE Corporation launched Common Vulnerabilities and Exposures (CVE), a system for cataloging publicly known information security vulnerabilities in one place. The CVE system was quickly adopted by the community and by security vendors specializing in vulnerability scanning and management.

Today, you can find a multitude of security vendors (Nessus, Qualys, Tanium, etc.) that specialize in vulnerability scanning. While their products can generate polished reports of the vulnerabilities they find, it’s equally important for customers to manually validate those findings. This involves performing some internal testing if you have the resources available, or contracting the work out to a third-party company.

Pentesting

The next iteration of security testing, penetration testing, grew out of the community’s desire (read: requirement) to manually validate exploitable vulnerabilities on a given network. A pentest is a hands-on security assessment of a computer system. Pentests are often narrow in scope, occurring at specific times of day and affecting a very limited number and type of systems. Exploitation is performed only in a safe manner so that production systems remain in a working state and the business isn’t interrupted.

The tester’s job is to validate whether they can exploit a vulnerability or take advantage of a misconfiguration in a system or piece of software. There are many potential reasons why your EDR/MDR product(s) might not see this activity, the most important being that the test may be occurring on a system that isn’t instrumented with EDR or MDR tools. Another reason many EDR/MDR products miss pentest activity is that the exploitation is often network-based, targeting weaker legacy protocols such as WPAD, LLMNR, and NetBIOS that remain prevalent in Windows environments.

In short, pentesting is about identifying a weakness or misconfiguration on a given system, component, or network device and patching, reconfiguring, or otherwise hardening the system in question. This type of testing is primarily focused on remediation in the form of prevention controls.

Red teaming

Red team engagements take pentesting one step further by extending the length of testing, usually to four to six weeks (sometimes longer). A longer duration is often recommended because it better mimics how real threat actors operate in the wild.

Rather than looking for vulnerabilities in a specific piece of software or system, a red team engagement is a holistic, practical assessment of the security of everything on a network. In other words, the pentest is about depth and the red team is about breadth. This type of testing emulates real-life adversary behaviors to create a more realistic attack scenario, going past prevention mechanisms to test an organization’s overall ability to detect and respond to malicious activity. Adversary emulation doesn’t mean you have to emulate the tactics, techniques, and procedures (TTPs) of an APT group, either. It’s often more beneficial to have the red team emulate commodity malware like Qbot, Emotet, and Zloader. APT groups oftentimes have a niche focus and a specific objective to fulfill, so commodity threats like these are far more common across environments. As a result, a business is much more likely to be affected by common malware than by an APT group.

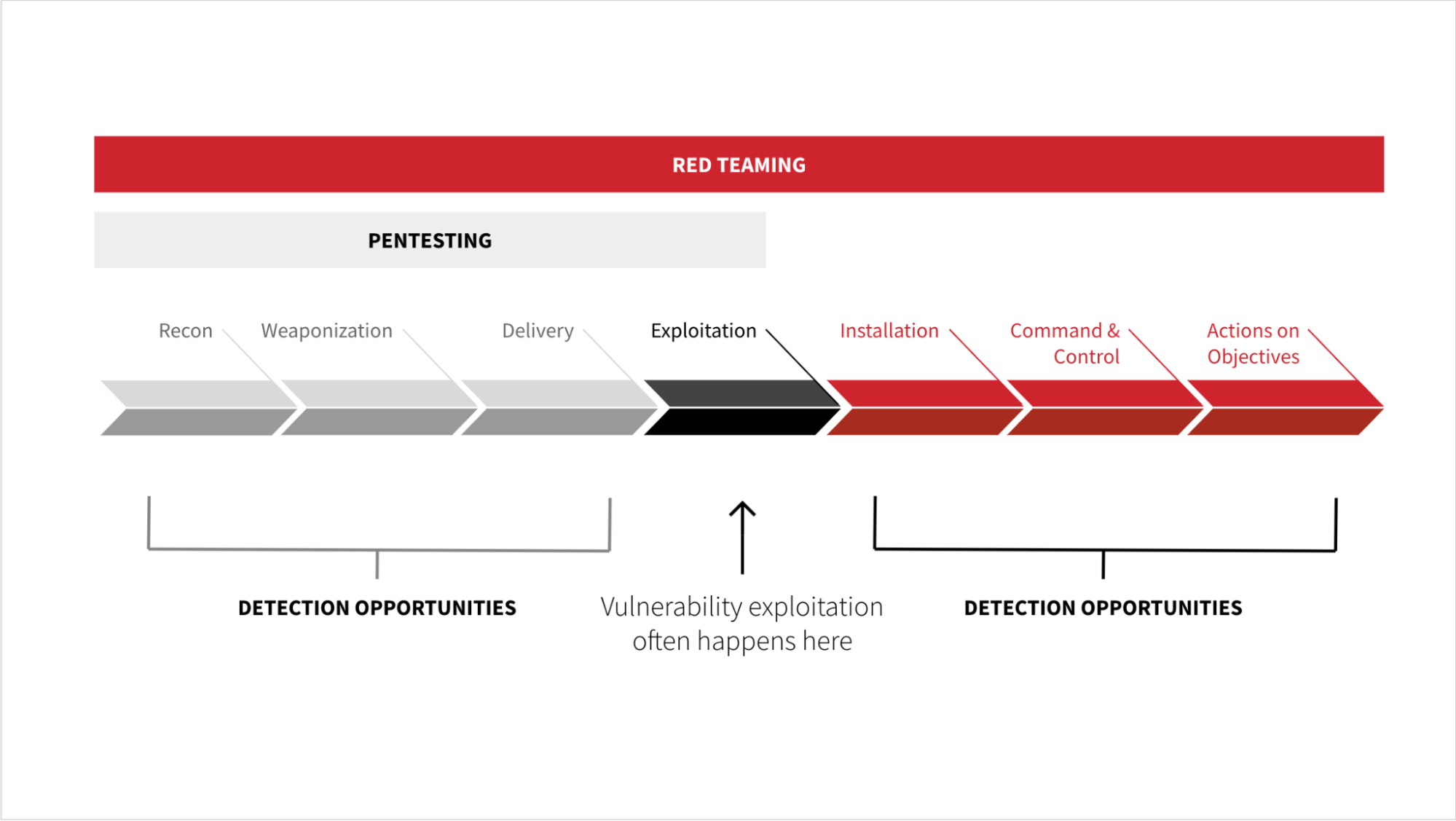

Additionally, during testing, a red team might exploit one vulnerability, many, or none at all. This is a big distinction from pentesting, where the tester tries to exploit all of the vulnerabilities that might affect the software or system being tested. Red teaming also seeks to demonstrate, from a behavioral perspective, what activity precedes vulnerability exploitation and what follows it during the post-exploitation phase. The accompanying graphic, originally presented by our Director of Intelligence Katie Nickels, has been adapted to illustrate how pentesting and red teaming differ in the detection opportunities they create across the Cyber Kill Chain.

These actions often generate behaviors that we can observe with process telemetry. If you don’t have EDR, Sysmon is a great, free alternative source for collecting this telemetry.
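
As a rough illustration of what observing behavior with process telemetry can look like, the Python sketch below parses a single Sysmon process-creation event (Event ID 1) and flags an Office application spawning a shell, a parent-child pattern commonly associated with malicious documents. This is a sketch under stated assumptions, not Red Canary detection logic: the sample event is trimmed and hypothetical, and the `SUSPICIOUS_CHILDREN` rule table and the function names are illustrative. A real pipeline would read events from the Microsoft-Windows-Sysmon/Operational log or a SIEM rather than from a string.

```python
import xml.etree.ElementTree as ET
from pathlib import PureWindowsPath
from typing import Dict, Optional

# Namespace used by Windows Event Log XML (including Sysmon events)
NS = {"e": "http://schemas.microsoft.com/win/2004/08/events/event"}

# Hypothetical rule table: parent process -> child processes that are
# unusual for it. Real detection logic would be far broader and tuned.
SUSPICIOUS_CHILDREN = {
    "winword.exe": {"powershell.exe", "cmd.exe", "wscript.exe"},
    "excel.exe": {"powershell.exe", "cmd.exe", "wscript.exe"},
}

# Trimmed, hypothetical Sysmon Event ID 1 (process creation) record.
SAMPLE_EVENT = r"""<Event xmlns="http://schemas.microsoft.com/win/2004/08/events/event">
  <System>
    <Provider Name="Microsoft-Windows-Sysmon"/>
    <EventID>1</EventID>
  </System>
  <EventData>
    <Data Name="Image">C:\Windows\System32\WindowsPowerShell\v1.0\powershell.exe</Data>
    <Data Name="CommandLine">powershell.exe -nop -w hidden</Data>
    <Data Name="ParentImage">C:\Program Files\Microsoft Office\root\Office16\WINWORD.EXE</Data>
  </EventData>
</Event>"""


def parse_process_create(event_xml: str) -> Optional[Dict[str, str]]:
    """Return the EventData fields of a Sysmon Event ID 1, or None for other events."""
    root = ET.fromstring(event_xml)
    if root.findtext("e:System/e:EventID", namespaces=NS) != "1":
        return None
    return {
        d.get("Name", ""): d.text or ""
        for d in root.findall("e:EventData/e:Data", namespaces=NS)
    }


def is_suspicious(fields: Dict[str, str]) -> bool:
    """Flag a child process that is unusual for its parent, per SUSPICIOUS_CHILDREN."""
    # Compare executable names only, case-insensitively, as Windows paths do.
    parent = PureWindowsPath(fields.get("ParentImage", "")).name.lower()
    child = PureWindowsPath(fields.get("Image", "")).name.lower()
    return child in SUSPICIOUS_CHILDREN.get(parent, set())


if __name__ == "__main__":
    fields = parse_process_create(SAMPLE_EVENT)
    if fields and is_suspicious(fields):
        print(f"Suspicious process: {fields['ParentImage']} -> {fields['Image']}")
```

Matching on the parent-child relationship rather than on a file hash or CVE is the point: it keys on behavior, which is exactly the kind of activity a red team engagement is meant to surface.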

Ultimately, red teaming drives the business to focus on its ability to detect and respond to threats in a timely manner. Remediation examines where preventive controls failed and identifies where detection opportunities exist. People and processes are also scrutinized and should be adjusted as needed.

Purple teaming

Lately, purple team engagements have become more popular. This type of security testing is highly collaborative between the red and blue teams and is designed to test an organization’s capabilities during a simulated attack. The red team performs an attack and then instructs the blue team (the defenders of the network) to find what they did using the data sources available to them. The blue team is evaluated on how well it prevented, logged, alerted on, detected, and responded to each TTP executed. Once testing has concluded, both teams meet to discuss what was detected and what was missed in order to fill any gaps in coverage. The Purple Team Exercise Framework (PTEF) created by Scythe is a great resource on how to conduct a purple team engagement.

Perhaps the most difficult aspect of this type of testing is deciding where to start. You can dive in by way of a technique or newly discovered vulnerability, or you can approach it from a tactic perspective, for example by identifying the various avenues of initial access (e.g., phishing or remote services). The decision ultimately comes down to the team’s security goals and defined deliverables. Red Canary’s annual Threat Detection Report can help you prioritize which techniques and threats to focus your resources on.
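
One lightweight way to record the per-TTP outcomes of a purple team exercise is a small scorecard. The Python sketch below is an illustration under assumptions, not part of PTEF or any Red Canary tooling: the `TechniqueResult` class, the `coverage_gaps` rule (a technique counts as a gap if it was neither prevented nor detected), and the sample results are all hypothetical. The MITRE ATT&CK technique IDs are real, but the outcomes attached to them are invented for the example.

```python
from dataclasses import dataclass, field
from typing import List, Set

# Outcome stages mirroring how the blue team is evaluated:
# prevented, logged, alerted, detected, responded.
OUTCOMES = ("prevented", "logged", "alerted", "detected", "responded")


@dataclass
class TechniqueResult:
    technique_id: str  # MITRE ATT&CK technique ID, e.g. "T1059.001"
    name: str
    observed: Set[str] = field(default_factory=set)  # outcomes the blue team achieved

    def __post_init__(self) -> None:
        # Reject typos so the scorecard only contains known outcome stages.
        unknown = self.observed - set(OUTCOMES)
        if unknown:
            raise ValueError(f"Unknown outcome(s): {sorted(unknown)}")

    def missed(self) -> List[str]:
        """Outcome stages the blue team did not achieve, in OUTCOMES order."""
        return [o for o in OUTCOMES if o not in self.observed]


def coverage_gaps(results: List[TechniqueResult]) -> List[str]:
    """Hypothetical gap rule: techniques that were neither prevented nor detected."""
    return [r.technique_id for r in results if not ({"prevented", "detected"} & r.observed)]


# Hypothetical results from a single exercise (not real data).
SAMPLE_RESULTS = [
    TechniqueResult("T1566.001", "Spearphishing Attachment", {"prevented", "logged", "alerted"}),
    TechniqueResult("T1059.001", "PowerShell", {"logged", "alerted", "detected", "responded"}),
    TechniqueResult("T1021.001", "Remote Desktop Protocol", {"logged"}),
]

if __name__ == "__main__":
    for r in SAMPLE_RESULTS:
        print(f"{r.technique_id} {r.name}: missed {', '.join(r.missed()) or 'nothing'}")
    print("Gaps to prioritize:", coverage_gaps(SAMPLE_RESULTS))
```

Keying results by ATT&CK technique ID makes it easier to compare one exercise with the next, or to line findings up against prioritization sources such as the Threat Detection Report. In this sample, RDP activity was logged but never alerted on or detected, so it would surface as the gap to address first.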

What to expect when you’re detecting

Armed with what we’ve covered about the various types of testing, let’s circle back to detection for a moment and ponder the following: when performing different kinds of security testing, should you expect the same detection outcome? For example, will you detect a vulnerability scan the same way you would detect a red team engagement? The short answer: no. This is largely because each type of testing serves a different use case within the business.

The results of a vulnerability scan or pentest usually serve system owners, developers, and security engineers who have responsibility for remediating vulnerabilities. It’s up to them to remediate in the form of a patch or reconfiguration in order to reduce the chance of exploitation, which ultimately lowers the risk an organization faces. On the other hand, red and purple teaming are largely about what TTPs you can and cannot detect. You’re trying to determine if there are any deficiencies within your security stack, people, or processes—and therefore you should expect to see relevant telemetry from your EDR tool and/or threat detection from your MDR provider when you’re performing a red or purple team engagement.

One step closer to defense in depth

Security testing has evolved so much in the last few decades, and our mindsets should evolve with it. Security assessments started with identifying CVEs within the environment. Now, we must think about how we can accurately identify TTPs. In addition to asking how many things we can prevent, we should be asking ourselves what we can and should detect. Transitioning to a detection and response mindset can be difficult because it requires more resources to manage and maintain. You will need to collect logs from different data sources, create detectors for interesting activity, staff people to triage and investigate those detection alerts, and employ others to remediate and properly remove threats from the environment.

As you think about how testing has evolved over time, it’s important to keep in mind that testing is simply a mechanism by which security gaps are exposed before they can be exploited by an adversary. No one type of test reigns supreme. Each test is as important as the next, so long as it helps the business reduce risk. By adopting a holistic approach to testing, your company will be able to safeguard its network and improve the efficacy of detection and prevention controls—and its follow-on response—for good.